
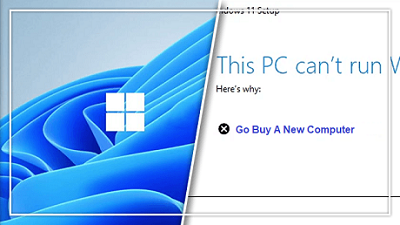

In a new FAQ, Apple has attempted to assuage concerns that its new anti-child abuse measures could be turned into surveillance tools by authoritarian governments. “Let us be clear, this technology is limited to detecting CSAM [child sexual abuse material] stored in iCloud and we will not accede to any government’s request to expand it,” the company writes.
Apple’s new tools, announced last Thursday, include two features designed to protect children. The first, called “communication safety,” uses on-device machine learning to identify and blur sexually explicit images received by children in the Messages app, and can notify a parent if a child aged 12 or younger decides to view or send such an image. The second is designed to detect known CSAM by scanning users’ images if they choose to upload them to iCloud. Apple is notified if CSAM is detected, and it will alert the authorities when it verifies that such material exists.
The plans were met with swift backlash from digital privacy groups and campaigners, who argued that they introduce a backdoor into Apple’s software. These groups note that once such a backdoor exists, there is always the potential for it to be expanded to scan for types of content that go beyond child sexual abuse material. Authoritarian governments could use it to scan for politically dissenting material, and anti-LGBT regimes could use it to crack down on sexual expression.
“Even a thoroughly documented, carefully thought-out, and narrowly-scoped backdoor is still a backdoor,” the Electronic Frontier Foundation wrote. “We’ve already seen this mission creep in action. One of the technologies originally built to scan and hash child sexual abuse imagery has been repurposed to create a database of ‘terrorist’ content that companies can contribute to and access for the purpose of banning such content.”
However, Apple argues that it has safeguards in place to stop its systems from being used to detect anything other than sexual abuse imagery.
It says that its list of banned images is provided by the National Center for Missing and Exploited Children (NCMEC) and other child safety organizations, and that the system “only works with CSAM image hashes provided by NCMEC and other child safety organizations.” Apple says it won’t add to this list of image hashes, and that the list is the same across all iPhones and iPads to prevent the targeting of individual users. The company also says that it will refuse demands from governments to add non-CSAM images to the list. “We have faced demands to build and deploy government-mandated changes that degrade the privacy of users before, and have steadfastly refused those demands. We will continue to refuse them in the future,” it says.
It’s worth noting that, despite Apple’s assurances, the company has made concessions to governments in the past in order to continue operating in their countries. It sells iPhones without FaceTime in countries that don’t allow encrypted phone calls, and in China it has removed thousands of apps from its App Store and moved to store user data on the servers of a state-run telecom.
The FAQ also fails to address some concerns about the feature that scans Messages for sexually explicit material. The feature does not share any information with Apple or law enforcement, the company says, but it doesn’t say how it will ensure that the tool remains focused solely on sexually explicit images. “All it would take to widen the narrow backdoor that Apple is building is an expansion of the machine learning parameters to look for additional types of content, or a tweak of the configuration flags to scan, not just children’s, but anyone’s accounts,” wrote the EFF. The EFF also notes that machine-learning technologies frequently misclassify this kind of content, and cites Tumblr’s attempts to crack down on sexual content as a prominent example of the technology going wrong. Follow this and more on OUR FORUM.
Every Microsoft announcement brings a lot of excitement, expectation, and a few intriguing debates. On June 24th, 2021, Microsoft unveiled the ambitious all-new Windows 11. However, it is still not officially available to install. Although Windows 11’s first preview is available to members of the Windows Insider Program, it will likely take another six to eight months for a stable version to reach your PC. Since users are eagerly looking forward to Windows 11, Microsoft has provided a way to check whether their current machine is compatible with Windows 11 so that they can plan their upgrade accordingly: the PC Health Check application.
While checking Windows 11 compatibility, many users are getting the error “This PC can’t run Windows 11,” which tells them that their device is incompatible with Windows 11 for various reasons. It is fair for the warning to appear on older devices that may not have the hardware capabilities to run Windows 11, such as devices with a Trusted Platform Module (TPM) older than version 2.0. What is strange and unacceptable is that the error also appears on comparatively new devices that meet the minimum system requirements. This made us wonder whether Microsoft actually wants us to upgrade to Windows 11.
The main reason may be security. With Windows 11, Microsoft is shifting more of its focus to security and privacy, much like Apple. Windows has traditionally been prone to malware attacks, and with Windows 11, Microsoft is looking for a fresh start to compete with Apple, at least on security and privacy. That may be why it doesn’t want Windows 11 installed on devices with older-generation processors, even if they meet the minimum requirements. Another reason could be that Microsoft wants to limit the number of existing PCs that can run Windows 11.
Because it is a new OS that may contain bugs, Microsoft might roll it out to other compatible PCs only after it achieves initial success. If it rolls Windows 11 out to everyone at once, many users will run into bugs, which could hurt the OS’s marketing. Microsoft does not want to repeat the same mistake that ruined the reputation of Windows 10.
There could also be a bigger picture. Some speculate that Microsoft is limiting Windows 11 to the latest devices, and doesn’t want older devices to upgrade, because it wants people to buy new devices with Windows 11 preinstalled. There is a plausible reason for this: if you upgrade an old Windows 10 device to Windows 11, Microsoft gains nothing, since Windows 10 users get the upgrade for free. But if you buy a new device, Microsoft earns money from the Windows 11 license installed on it. Follow this and more by visiting OUR FORUM.
There are lots of reasons to upgrade to the preview version of Windows 11, but that doesn't mean you have to live with every aspect of the new user interface. Perhaps, like me, you don't like the new Start Menu because it takes up so much space. Or maybe you hate that File Explorer is missing a ribbon menu, or that right-click menus show only seven options and force you to click "Show more options" to see them all. The good news is that, with a combination of registry tweaks, third-party apps and some different artwork, you can get some of the look and feel of Windows 10 back in Windows 11. The bad news is that Microsoft doesn't seem to want you to go back to a previous UI, so future updates may disable any registry hacks you use. And these are hacks for a frequently changing beta OS, so there's no guarantee you won't run into bugs; proceed at your own risk. Below, we'll outline a number of tweaks for different parts of the UI, and you can use one, several, or all of them to get the look you want.
Get a More Windows 10-Like Start Menu: read more on our Forum.
